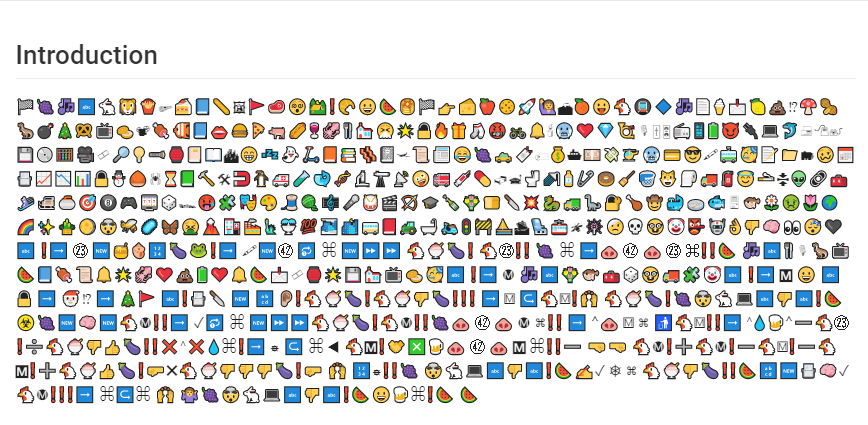

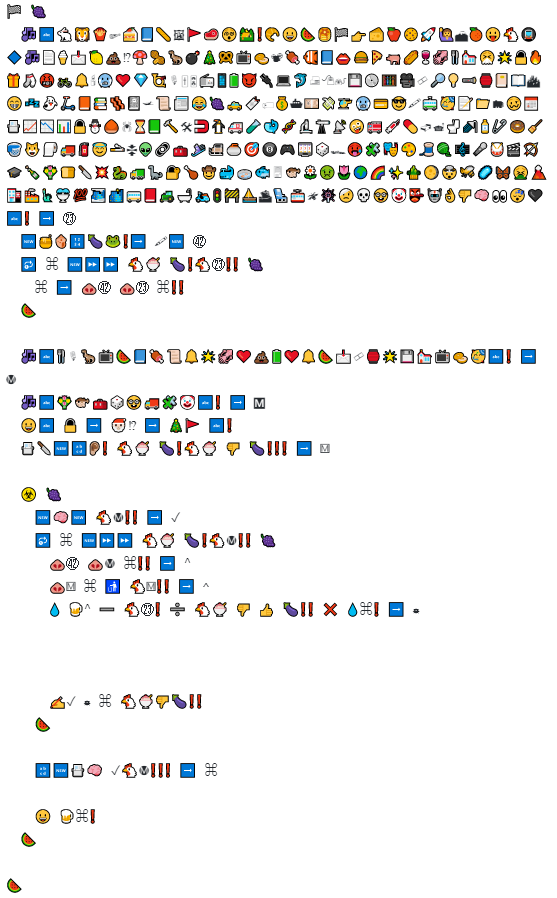

I immediately recognized this as Emojicode. The Emojicode debugger has an option to prettify the code, which helped a lot.

As the documentation is rather sparse, I had to google most of the emojis to find out what is going on. In short, the program reads some user input and then iterates over an embedded string. For each position i, it takes the byte of the user input at index i modulo the input length, shifts the current character of the string down by 128, XORs it with the index i, and finally XORs the result with that input byte. I wrote a small Python script that recreates this behaviour.

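```python
# Encrypted values taken from the Emojicode program
values = [56, 72, 39, 43, 15, 48, 46, 106, 66, 59, 55, 69, 35, 77,
          69, 66, 15, 33, 89, 93, 59, 85, 57, 43, 44, 123]
inpu = "🔑"  # user input, i.e. the candidate key

# The UTF-8 bytes of the input form the key
b = list(inpu.encode("utf-8"))

str1 = ""  # shifted values as hex
str2 = ""  # signed key bytes as hex
str3 = ""  # decrypted output
for i in range(len(values)):
    # Key byte at position i modulo the key length, read as a signed byte
    x = b[i % len(b)]
    if x >= 128:
        x -= 256
    # Shift the value down by 128 and XOR it with the index
    u = (values[i] - 128) ^ i
    str1 += hex(u)
    str2 += hex(x)
    # XOR with the key byte to get the plaintext character
    u ^= x
    str3 += chr(u)
print(str1)
print(str2)
print(str3)
```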
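The signed-byte conversion can also be avoided by working modulo 256: subtracting 128 from a byte is the same as XORing it with 0x80. Because every value is below 128 and every byte of 🔑 is at least 0x80, the high bits cancel and the result of the signed computation is a plain character code. The following sketch compares both formulations for this key; the function names `decrypt_signed` and `decrypt_unsigned` are hypothetical and not part of the challenge.

```python
VALUES = [56, 72, 39, 43, 15, 48, 46, 106, 66, 59, 55, 69, 35, 77,
          69, 66, 15, 33, 89, 93, 59, 85, 57, 43, 44, 123]


def decrypt_signed(values: list[int], key: bytes) -> str:
    """Mirror of the script: signed key bytes and (v - 128) ^ i."""
    out = []
    for i, v in enumerate(values):
        k = key[i % len(key)]
        if k >= 128:
            k -= 256  # reinterpret as a signed byte
        out.append(chr(((v - 128) ^ i) ^ k))
    return "".join(out)


def decrypt_unsigned(values: list[int], key: bytes) -> str:
    """Same transform on unsigned bytes: v - 128 (mod 256) equals v ^ 0x80."""
    return "".join(
        chr((v ^ 0x80 ^ i ^ key[i % len(key)]) & 0xFF)
        for i, v in enumerate(values)
    )


key = "🔑".encode("utf-8")
print(decrypt_signed(VALUES, key))
print(decrypt_unsigned(VALUES, key))
```

Both calls print the same string. The unsigned version only reproduces the signed one when the high bits cancel, which holds for these values and this key but is not guaranteed for arbitrary input.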

As the flag always starts with HV19, we can use this known plaintext to calculate the first four bytes of the XOR key: XORing each known character with (values[i] - 128) ^ i gives -0x10, -0x61, -0x6c, -0x6f. Adding 256 to turn these signed values back into unsigned bytes gives 0xf0 0x9f 0x94 0x91, which is the UTF-8 encoding of the key emoji (🔑, U+1F511).
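The known-plaintext step can be sketched as follows. It inverts plain = ((v - 128) ^ i) ^ k for each known character and converts the signed result back to an unsigned byte. The helper name `recover_key` is hypothetical, and the sketch assumes the key is no longer than the known prefix (four bytes); the recovered bytes happen to decode to a single emoji, which supports that assumption.

```python
VALUES = [56, 72, 39, 43, 15, 48, 46, 106, 66, 59, 55, 69, 35, 77,
          69, 66, 15, 33, 89, 93, 59, 85, 57, 43, 44, 123]


def recover_key(values: list[int], known: str) -> bytes:
    """Recover key bytes from a known plaintext prefix.

    Since plain = ((v - 128) ^ i) ^ k, the key byte is k = ((v - 128) ^ i) ^ ord(p).
    """
    key = []
    for i, p in enumerate(known):
        k = ((values[i] - 128) ^ i) ^ ord(p)  # signed key byte, e.g. -0x10
        key.append(k + 256 if k < 0 else k)    # back to an unsigned byte
    return bytes(key)


key = recover_key(VALUES, "HV19")
print(key.hex())            # f09f9491
print(key.decode("utf-8"))  # 🔑
```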

Using 🔑 as the XOR key returns the flag:

`HV19{*<|:-)____\o/____;-D}`

Hi,

Congrats! You're one of the few (judging by the write-ups I read) who actually reversed the Emojicode instead of just brute-forcing all emojis ;-)

Perhaps I should have used a more complex “key”…

Happy Hacking,

M.

Hi, thanks for your comment!

I actually spent like five hours looking at emojis and trying to understand what it does. :D

Have a nice day,

Sebastian!